In April 2026, Cloudflare announced Agent Memory during their first Agents Week. The pitch: stop building memory infrastructure, start consuming it. A managed service that extracts information from agent conversations, stores it persistently, and retrieves what's relevant on demand.

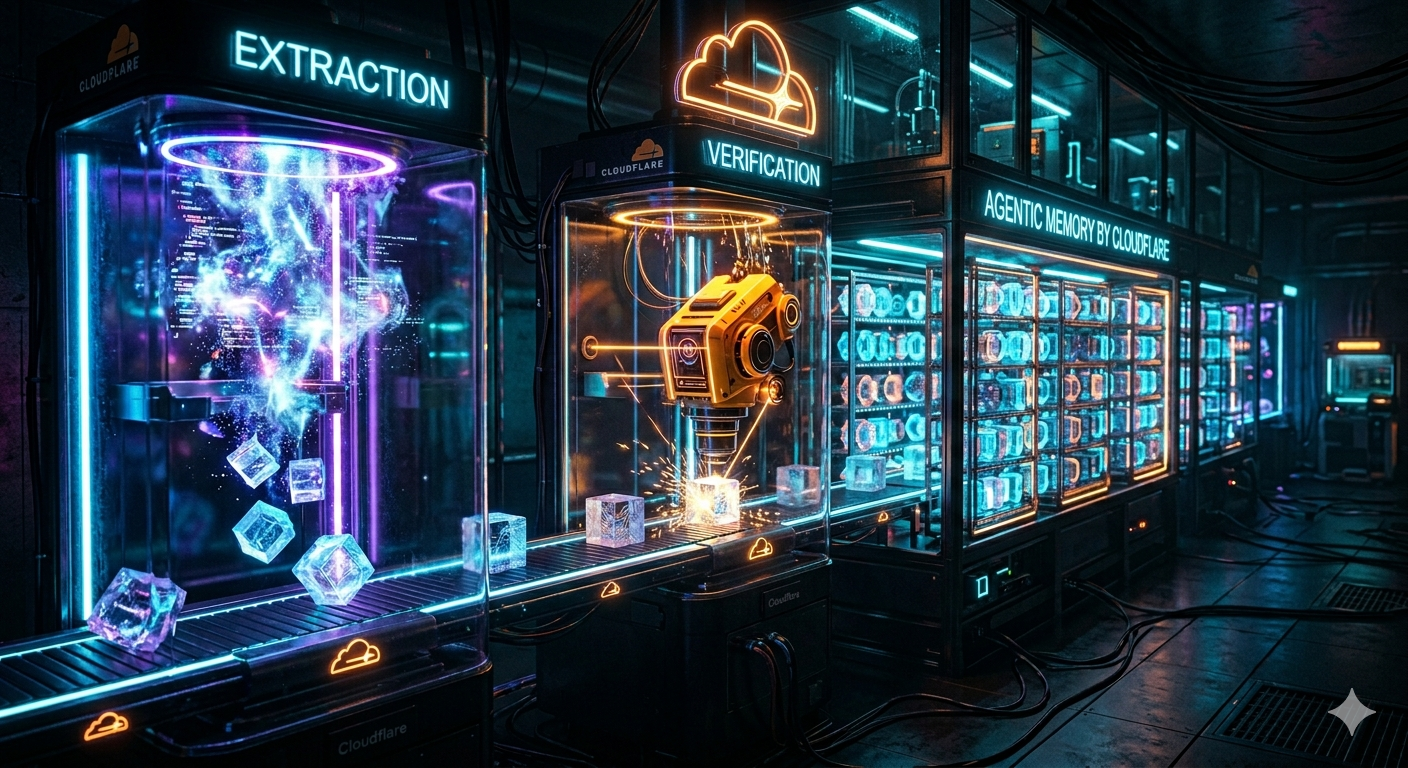

This isn't a vector database with a wrapper. It's a full extraction-verification-retrieval pipeline that runs on Cloudflare's edge. And it signals where the industry is heading: memory as a managed primitive, not a DIY project.

The Problem: Context Rot at Scale

Even as context windows grow past 1M tokens, stuffing everything in doesn't work.

Cloudflare's engineering team frames it as "context rot" — quality degrades the more you pack in. You face two bad options: keep everything and watch output quality decline, or aggressively prune and lose information the agent needs later.

This tension gets worse for long-running agents. A coding agent that runs for weeks against a real codebase. A support agent that handles thousands of tickets. A research agent that accumulates findings over months. These agents need memory that stays useful as it grows, not memory that performs well on a clean benchmark dataset.

The managed memory market has been heating up: Mem0, Zep, Letta, LangMem. But Cloudflare's entry is different. They're not a memory startup — they're infrastructure. When Cloudflare ships a primitive, it tends to become the default for teams already on their platform.

Agent Memory isn't "store embeddings, query embeddings." It's a multi-stage pipeline with explicit verification.

Ingestion Pipeline

When a conversation arrives for storage, it passes through four stages:

1. Content addressing. Every message gets a SHA-256 hash as its ID. Re-ingesting the same conversation never double-writes. If an agent restarts and replays history, ingestion stays idempotent. This is the foundation for reliable memory at scale.

2. Two-pass extraction. The Full pass chunks messages at roughly 10K characters and extracts summary-style memories, with up to four chunks running in parallel. But summaries alone lose concrete details: "ran on version 2.3.1" becomes "ran the software." So a Detail pass fires on longer conversations (9+ messages), specifically targeting names, prices, version numbers, and entity attributes. The two result sets are then merged.

3. Eight-point verification. Each extracted memory gets verified against the source transcript. The verifier checks: entity identity, object identity, location context, temporal accuracy, organizational context, completeness, relational context, and whether inferred facts are actually supported by the conversation. Items get passed, corrected, or dropped.

4. Four-type classification. Verified memories are classified into: Facts (persistent truths), Events (things that happened), Instructions (workflows, preferences), and Tasks (action items). Facts and instructions are keyed by normalized topic — new memories supersede rather than delete old ones.
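The content-addressing idea in step 1 can be sketched in a few lines. This is a minimal illustration, not Cloudflare's implementation: the names `message_id` and `MessageStore` are hypothetical, and exactly which fields go into the hash (here, role plus content) is an assumption.

```python
import hashlib
from dataclasses import dataclass, field


def message_id(role: str, content: str) -> str:
    """Content-addressed ID: the SHA-256 hex digest of the message itself.

    Hashing role + content is an assumption made for this sketch.
    """
    payload = f"{role}\n{content}".encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


@dataclass
class MessageStore:
    """Toy in-memory store; a real service would persist this."""
    messages: dict[str, str] = field(default_factory=dict)

    def ingest(self, conversation: list[tuple[str, str]]) -> int:
        """Store each (role, content) pair once; return how many were new."""
        written = 0
        for role, content in conversation:
            mid = message_id(role, content)
            if mid not in self.messages:  # same content -> same ID -> no rewrite
                self.messages[mid] = content
                written += 1
        return written


store = MessageStore()
conversation = [("user", "We ran on version 2.3.1"), ("assistant", "Noted.")]
first = store.ingest(conversation)   # both messages are new
replay = store.ingest(conversation)  # a replay after a restart writes nothing
```

Because the ID is derived from the content, a replay needs no separate "have I seen this?" bookkeeping. One consequence of this simple version is that two genuinely identical messages collapse into a single entry.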

This is more sophistication than most teams build themselves. The verification stage alone catches a class of hallucinations that naive extraction misses: the model confidently extracting "facts" that were never actually stated.
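The "supersede rather than delete" behavior from step 4 can be pictured as an append-only log plus an index of the live entry per topic. The sketch below is an assumption about how such a store might look; `FactStore`, `normalize_topic`, and the normalization rule (lowercase, collapse whitespace) are all hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Memory:
    topic_key: str                       # normalized topic, e.g. "package manager"
    value: str
    superseded_by: Optional[int] = None  # log index of the newer memory, if any


def normalize_topic(topic: str) -> str:
    """Hypothetical normalization: lowercase and collapse whitespace."""
    return " ".join(topic.lower().split())


class FactStore:
    def __init__(self) -> None:
        self.log: list[Memory] = []        # append-only history
        self.current: dict[str, int] = {}  # topic key -> index of the live memory

    def write(self, topic: str, value: str) -> None:
        key = normalize_topic(topic)
        self.log.append(Memory(key, value))
        new_index = len(self.log) - 1
        old_index = self.current.get(key)
        if old_index is not None:
            # Mark the old entry as superseded, but keep it in the log.
            self.log[old_index].superseded_by = new_index
        self.current[key] = new_index

    def lookup(self, topic: str) -> Optional[str]:
        index = self.current.get(normalize_topic(topic))
        return None if index is None else self.log[index].value


facts = FactStore()
facts.write("Package Manager", "npm")
facts.write("package  manager", "pnpm")  # same normalized key, newer value
```

After the second write, `facts.lookup("package manager")` returns the newer value, while the older entry stays in `facts.log` with a pointer to its replacement.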

Retrieval: Five Channels, One Answer

The retrieval side is where Agent Memory gets interesting. Five search channels run in parallel:

| Channel | What It Catches |
|---|---|
| Full-text search | Porter stemming for keyword precision, for when you know the exact term |
| Fact-key lookup | Direct match on normalized topic keys |
| Raw message search | Safety net for verbatim details the extraction pipeline generalized away |
| Direct vector search | Query embedding finds semantic neighbors |
| HyDE vector search | Generates a hypothetical answer and embeds that, catching vocabulary mismatches |

The results merge via Reciprocal Rank Fusion (RRF). Fact-key matches carry the heaviest weight. Ties break toward newer entries.
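A weighted form of Reciprocal Rank Fusion is easy to sketch: each channel contributes `weight / (k + rank)` for every memory it returns, and the contributions are summed. The channel weights and timestamps below are invented for illustration (the source only says fact-key matches weigh most and ties go to newer entries), and `k = 60` is the constant conventionally used with RRF, not a documented Cloudflare setting.

```python
from collections import defaultdict


def fuse(
    rankings: dict[str, list[str]],  # channel name -> memory IDs, best first
    weights: dict[str, float],       # channel name -> weight (assumed values)
    created_at: dict[str, float],    # memory ID -> creation timestamp
    k: int = 60,
) -> list[str]:
    """Weighted RRF: sum weight / (k + rank) over channels, newest wins ties."""
    scores: dict[str, float] = defaultdict(float)
    for channel, ranked_ids in rankings.items():
        weight = weights.get(channel, 1.0)
        for rank, memory_id in enumerate(ranked_ids, start=1):
            scores[memory_id] += weight / (k + rank)
    # Sort by descending score, then by descending timestamp.
    return sorted(scores, key=lambda m: (-scores[m], -created_at[m]))


rankings = {
    "fact_key": ["m2"],
    "full_text": ["m1", "m2"],
    "vector": ["m1", "m3"],
}
weights = {"fact_key": 2.0, "full_text": 1.0, "vector": 1.0}
created_at = {"m1": 100.0, "m2": 200.0, "m3": 300.0}
fused = fuse(rankings, weights, created_at)  # ["m2", "m1", "m3"]
```

Here `m2` wins despite topping only one channel, because the fact-key hit carries double weight. RRF works on ranks rather than raw scores, which is why channels with incomparable scoring scales (BM25-style text scores, cosine similarities) can be merged without calibration.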

The HyDE channel is the clever one. Standard vector search embeds the question and finds similar memories. But what if the question and answer use completely different vocabulary? "What package manager does the team prefer?" might not embed close to a memory that says "always use pnpm for this project." HyDE generates what the answer would look like, then searches for memories similar to that hypothetical answer. It catches multi-hop queries that direct embedding misses.
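The difference between direct and HyDE search shows up even with a toy embedding. In the sketch below, `embed` is a bag-of-words stand-in for a real embedding model, and `fake_generate` is a hard-coded stand-in for the LLM call that would draft a hypothetical answer; both are assumptions made purely to keep the example self-contained.

```python
import math
import re
from collections import Counter
from typing import Callable


def embed(text: str) -> Counter:
    """Toy embedding: word counts. A real system would call an embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(count * b[token] for token, count in a.items())
    norm_a = math.sqrt(sum(c * c for c in a.values()))
    norm_b = math.sqrt(sum(c * c for c in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def nearest(query_text: str, memories: list[str]) -> str:
    """Direct search: embed the query text and return the closest memory."""
    query_vec = embed(query_text)
    return max(memories, key=lambda m: cosine(query_vec, embed(m)))


def hyde_search(question: str, memories: list[str],
                generate: Callable[[str], str]) -> str:
    """HyDE: embed a hypothetical answer instead of the question itself."""
    return nearest(generate(question), memories)


def fake_generate(question: str) -> str:
    # Stand-in for an LLM drafting what an answer might look like.
    return "we always use pnpm for this project"


memories = [
    "the team manager prefers morning standups",
    "always use pnpm for this project",
]
question = "What package manager does the team prefer?"
direct_hit = nearest(question, memories)                  # the standup memory
hyde_hit = hyde_search(question, memories, fake_generate)  # the pnpm memory
```

Direct search is pulled toward the memory that shares surface words with the question ("team", "manager"), while the hypothetical answer lands next to the memory that actually answers it. The hypothetical answer does not need to be correct; it only needs to be phrased the way a stored memory would be.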

The raw message search is the safety net. Extraction pipelines generalize — they turn specific details into summaries. Raw message search queries the stored conversation directly, catching verbatim details that extraction smoothed over.

Cloudflare defaults to Llama 4 Scout (17B MoE) for extraction and classification. Only the final synthesis step — composing a natural-language answer from retrieved memories — uses the larger Nemotron 3 (120B MoE).

The reasoning: larger models only helped at synthesis. Extraction and classification are more constrained tasks where the smaller model performs equivalently. This keeps costs down while reserving capacity for where it matters.

Time arithmetic is handled separately. Relative times like "the thing from two weeks ago" get computed by regex and arithmetic, not the LLM. Models drift on temporal math. Separate path, exact computation.
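A deterministic resolver for relative dates might look like the following. It is a sketch of the general regex-plus-arithmetic approach, not Cloudflare's code: the pattern, the supported phrases (only "yesterday" and "N days/weeks ago"), and the function name `resolve_relative_date` are all assumptions.

```python
import re
from datetime import date, timedelta
from typing import Optional

NUMBER_WORDS = {
    "a": 1, "an": 1, "one": 1, "two": 2, "three": 3, "four": 4, "five": 5,
    "six": 6, "seven": 7, "eight": 8, "nine": 9, "ten": 10,
}
UNIT_DAYS = {"day": 1, "week": 7}

# Matches phrases such as "3 days ago" or "two weeks ago".
PATTERN = re.compile(
    r"\b(\d+|" + "|".join(NUMBER_WORDS) + r")\s+(day|week)s?\s+ago\b"
)


def resolve_relative_date(text: str, today: date) -> Optional[date]:
    """Return the absolute date a relative phrase refers to, or None."""
    lowered = text.lower()
    if re.search(r"\byesterday\b", lowered):
        return today - timedelta(days=1)
    match = PATTERN.search(lowered)
    if match is None:
        return None
    amount_text, unit = match.group(1), match.group(2)
    amount = int(amount_text) if amount_text.isdigit() else NUMBER_WORDS[amount_text]
    return today - timedelta(days=amount * UNIT_DAYS[unit])


resolved = resolve_relative_date("the thing from two weeks ago", date(2026, 4, 20))
# resolved == date(2026, 4, 6)
```

Months and years are deliberately left out: they are not fixed-length, so they need calendar-aware handling rather than a simple day multiplier. The point of the separate path is that the answer is computed, not generated, so it is the same every time.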

What Cloudflare Gets Right

Entity-scoped by default. Memory is namespaced by agent ID and entity ID (user, session, or tenant). You don't have to design the partitioning — the API does it. The latency profile is tuned for "fetch this user's memory at the start of every turn."

Shared memory profiles. A memory profile doesn't have to belong to a single user. Teams can share a profile — knowledge learned by one engineer's coding agent is available to everyone. Cloudflare is already using this internally: an agentic code reviewer learned to stay quiet when a specific pattern had been flagged previously and the author chose to keep it.

Hybrid storage collapsed. Each memory item carries a key, a value, optional structured metadata, and an embedding. You can query by exact key, by metadata filter, or by semantic similarity. This collapses the typical "I have a key-value store and a vector store and they're out of sync" problem into a single API.
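To make the "one item, three access paths" idea concrete, here is a small in-memory sketch. The class and method names (`MemoryItem`, `HybridStore`, `by_key`, `by_metadata`, `by_similarity`) are hypothetical and do not reflect the actual Agent Memory API; the two-dimensional embeddings are placeholders for real model output.

```python
import math
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class MemoryItem:
    key: str
    value: str
    embedding: list[float]
    metadata: dict[str, str] = field(default_factory=dict)


def cosine(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.hypot(*a) * math.hypot(*b)
    return dot / norm if norm else 0.0


class HybridStore:
    """One record per memory, so key, metadata, and vector views cannot drift apart."""

    def __init__(self) -> None:
        self.items: dict[str, MemoryItem] = {}

    def put(self, item: MemoryItem) -> None:
        self.items[item.key] = item

    def by_key(self, key: str) -> Optional[MemoryItem]:
        return self.items.get(key)

    def by_metadata(self, **wanted: str) -> list[MemoryItem]:
        return [
            item for item in self.items.values()
            if all(item.metadata.get(k) == v for k, v in wanted.items())
        ]

    def by_similarity(self, query: list[float], top_k: int = 1) -> list[MemoryItem]:
        ranked = sorted(
            self.items.values(),
            key=lambda item: cosine(query, item.embedding),
            reverse=True,
        )
        return ranked[:top_k]


store = HybridStore()
store.put(MemoryItem("pkg-manager", "always use pnpm", [1.0, 0.0], {"type": "instruction"}))
store.put(MemoryItem("deploy-day", "deploys happen on Tuesdays", [0.0, 1.0], {"type": "fact"}))
```

Because every access path reads the same record, an update through `put` is immediately visible to key lookups, metadata filters, and similarity queries alike.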

Workers-native latency. Reads from a Worker in the same region as the memory store hit single-digit milliseconds. This matters because agent memory is on the hot path of every turn. Fifty milliseconds of retrieval latency compounds across a conversation.

Data portability. "Agent Memory is a managed service, but your data is yours. Every memory is exportable." Cloudflare commits to letting the knowledge your agents accumulate leave with you if your needs change. This is table stakes for enterprise adoption.

The Tradeoffs

Kristopher Dunham from Cloudflare highlighted the honest constraints:

Vendor lock-in. This is Cloudflare infrastructure. If you're not already on Workers, you're adding a dependency. The REST API works from anywhere, but you're paying for cross-network latency.

Extraction quality depends on models you don't control. The extraction pipeline uses Cloudflare's chosen models with Cloudflare's prompts. If extraction misses something important, you can't tune it.

Automatic ingestion isn't magic. Dunham recommends using the explicit remember tool for critical facts rather than relying on automatic extraction. The pipeline is good, but explicit beats implicit for information that must not be lost.

Pricing is unknown. The service is still in private beta, and no pricing has been announced. Memory services can get expensive at scale: Mem0's managed tier charges per operation, which adds up with high-frequency interactions.

Six Problems, Partial Solutions

We track six critical problems any serious memory system must solve:

- Relationship — facts connect to other facts

- Temporal — what was true yesterday may not be true today

- Consolidation — contradictions must be resolved, knowledge must merge

- Decay — memory that only grows drowns in noise

- Abstention — knowing when you don't know

- Verification — tracing where any answer came from

Agent Memory addresses Relationship through its typed classification and fact-key linking. Temporal gets partial treatment: relative dates are resolved to absolute ones during extraction, and newer memories supersede older ones. Consolidation happens during extraction (deduplication, superseding), but there's no explicit background consolidation like GBrain's dream cycle.

The gaps: Decay is implicit (old facts get superseded), but there's no principled forgetting mechanism. Abstention is unaddressed: the system returns what it finds, with no signal for when it doesn't know. Verification provides source provenance (line indices in transcripts) but not answer-level citation guarantees.

This is typical of managed services: they solve the common cases well, but edge cases require building on top rather than customizing underneath.

Key Takeaways

- Memory is becoming infrastructure. Cloudflare shipping a managed memory primitive signals that this is no longer a differentiating feature to build yourself. It's table stakes to consume.

- Extraction pipelines need verification. The eight-point verification stage catches hallucinated facts that naive extraction misses. If you're building your own, add a verification pass.

- Five retrieval channels beat one. Full-text, fact-key, raw message, direct vector, and HyDE each catch different query types. RRF fusion combines them. One channel alone leaves gaps.

- Shared memory enables team knowledge. Memory profiles that span users let institutional knowledge accumulate. What one engineer's agent learns, everyone's agent knows.

- Explicit beats automatic for critical facts. Don't rely solely on extraction pipelines for information that must be remembered. Use explicit write operations.

Agent Memory is in private beta. If you're building agents on Cloudflare, the waitlist is open. For everyone else, the architecture is worth studying — it's a preview of what managed memory looks like when a platform company takes it seriously.

Try Hypabase Memory — memory infrastructure that addresses all six problems with hypergraph-native storage.
